There is a fundamental disconnect in our data stacks. We have accepted a world where the database engine (like BigQuery) is entirely separated from the business logic (semantic layer).

We spend millions building perfect semantic models in Looker, dbt, or Power BI, only to watch that logic evaporate the second an engineer opens a Jupyter Notebook or a raw SQL console. We get back to guessing which id joins where, and re-calculating "Net Revenue" for the thousandth time. This creates silos. It replicates logic. And eventually, the numbers are not matching.

Our “Single Source of Truth” is a lie if it only exists inside a dashboard tool.

This brings us to a fascinating piece of research from the Google team Semantic Data Modeling, Graph Query, and SQL, Together at Last? (CIDR 26).

The paper suggests something radical: Semantic models are just graphs. Instead of building a layer on top of the database, why not move the logic into the engine? If the engine understands the graph natively, the Semantic Layer stops being a separate SaaS product and becomes a database primitive.

As a critical optimist, I find the technical elegance of this approach massive. However, while the engine is ready for this shift, our organizational structures are probably not. Moving logic into raw tables can creates governance risks.

In this article, I will detail the features introduced in this research and, crucially, propose a standalone Semantic Graph Object, the missing architectural link needed to make this reality survive the messy world of enterprise data.

Disclaimer: Please note that this post explores theoretical concepts and does not reflect an official Google Cloud or BigQuery product roadmap. To make these abstract research concepts easier to digest, I am projecting them onto BigQuery as a writing exercise to illustrate how a native semantic layer might look in a real-world enterprise environment.

Summary

I. The Paper’s Promise

I.1 Virtual Columns

I.2 Join Columns

I.3 Horizontal Aggregation

I.4 Measure Columns

I.5 Multi-Modal Optimization

II. Architectural Gap & Limitations

II.1 The Clean Data Fallacy

II.2 Topology is not Semantics

II.3 The Return of Snippet Engineering

II.4 The Monolith of Compromise

III. The Architectural Solution

III.1 Object Definition: Artifact over Patching

III.2 Solving the Architectural Traps

III.3 The Interface for the Agentic Ecosystem

IV. Conclusion

The Paper‘s Promise

Every time we write a JOIN clause, we are violating the DRY (Don't Repeat Yourself) principle. The relationship between Orders and Customers does not change, yet we ask every analyst to redefine that relationship in every single script.

The center of the universe shouldn’t be the Fact Table, it should be wherever our question starts.

The Google paper proposes a shift from legacy “Fact-to-Dimension” hierarchies toward a Semantic Data Graph. By treating tables as nodes and foreign keys as edges, the database engine finally understands the topology of our business. Now stop asking the consumer to be the Architect. The relationship belongs in the schema, not the query.

Relationships are bidirectional. In a Semantic Graph, the Customer node knows about its Orders just as much as an Order knows its Customer. We can start our analysis from any node in the network and walk in any direction.

Let me highlight the keys features of this paper.

Virtual Columns

Standard SQL objects forces a choice: store a calculated value in a table (costly storage) or define it in a view (often disconnected). Virtual Columns allow us to encapsulate business logic directly in the table schema. They are calculated at query time (Lazy Evaluation), meaning we can add hundreds of them without increasing storage costs.

Join Columns

This is the killer feature! By defining Join Columns , the relationship is baked into the column itself. Instead of writing JOIN...ON repeatedly, we use dot-notation to walk the graph.

This only requires a standard DDL to build the relationship between two tables.

Now customer is a new STRUCT virtual column inside the orders table following the defined relationship. And inversely, orders is a new ARRAY<STRUCT> virtual column in customer table. Instead of writing JOIN...ON, we can simply “walk” the relationship.

Horizontal Aggregation

Standard SQL struggles with arrays or multi-hop relationships. Usually, we need a subquery or a complex join that risks a cross-product (fan-out). Horizontal Aggregation allows us to aggregate across graph edges directly within the SELECT statement using UNNEST(path).

Measure Columns

The most dangerous part of SQL is the Fan-out Bug, where joining a 1:N relationship causes our sums to double or triple-count. Google’s solution is the MEASURE type. These are grain-locked to their source table. No matter how many tables we join downstream, the engine intelligently deduplicates the rows before summing.

Multi-Modal Optimization

Unlike external semantic layers (Looker/dbt) that generate complex SQL text, this native approach bakes the logic into the query optimizer. The engine understands the graph topology, enabling optimizations like Smart Join Elimination and Lazy Evaluation.

These queries are performing at least as well as queries generated from an disconnected semantic layer tool.

Also, it supports both SQL and GQL, Graph Query Language (even if not yet released in a public version), allowing us to traverse relationships using the syntax that best fits the problem.

Architectural Gap & Limitations

While the technical primitives introduced by the Google team are revolutionary, there remains a vast distance between “engine capabilities” and “enterprise architecture.”

When we step away from the environment of research papers and apply this to a production Data Warehouse, we meets the friction of the real world.

The Clean Data Fallacy

The paper relies on the elegance of clean relationships, but real-world data engineering is rarely a series of perfect Primary Key to Foreign Key matches. We face at least three messy patterns that the current proposal leaves in a state of ambiguity:

Note: It is important to remember that this is a research paper, not customer documentation. While the authors claim the engine can handle these complex messy data scenarios, the paper does not explicitly detail the syntax or implementation strategy for doing so. Until we see the code, the “how” remains ambiguous.

Complex Joins: Real-world joins are rarely clean

PK = FKmatches. They often involveCOALESCE,REGEXmatching, or time-window logic. While the engine allows arbitrary subqueries in edge definitions, this shifts massive complexity into the DDL, turning the schema definition into a repository of complex logic.Nested Structures (The Array Reality): BigQuery is built on nested fields (

ARRAY<STRUCT>). A foreign key often lives inside a nested array (e.g.,orders.items[].product_id) rather than at the root level. To create a graph edge here without flattening the table is technically difficult. Does the edge point from the order? Or from the specific item?Lateral Virtual Dependencies: A specific challenge arises when a virtual column requires data from two different tables. For example, calculating

net_marginon an Orders table might requireOrders.price(local) minusProducts.cost(remote table). This creates a dependency chain that is difficult to manage via simple DDL.

Note: How do we manage

N:Nrelationships?

Topology is not Semantics

The paper treats “Semantics” primarily as a synonym for “Relationships.” This is probably the main disagreement I have with the paper.

A foreign key is a technical instruction, it defines the topology (the path). A semantic edge is a business contract, it defines the intent (the why).

By focusing purely on the joins and on additional columns, this approach is blind to context and lacks the extensibility needed for governance.

Without an additional layer to attach rich metadata (glossaries, ownership, synonyms, or golden queries) the graph risks becoming just a faster way to generate technically perfect but contextually wrong answers.

The Return of Snippet Engineering

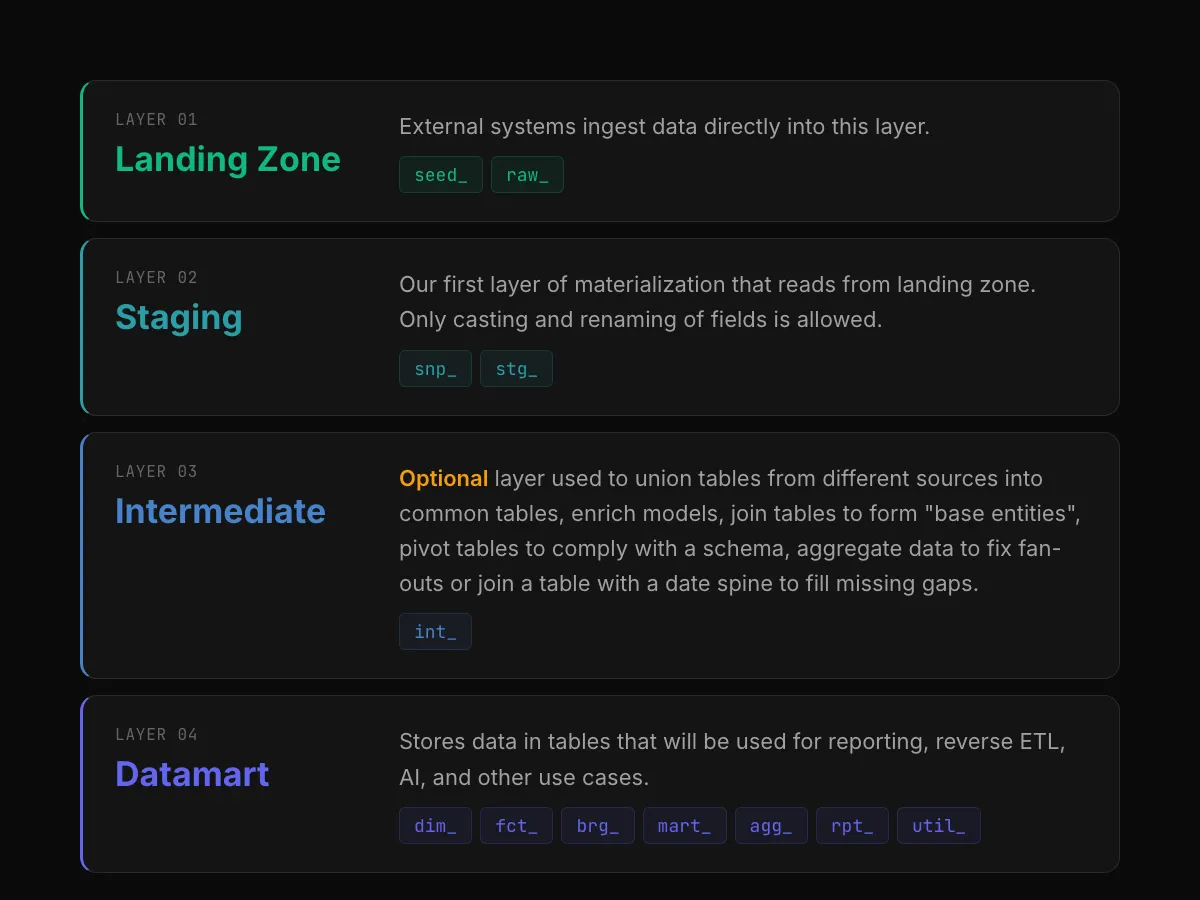

The paper suggests using “Semantic Views” to hide physical tables. While useful for security, this concept is architecturally insufficient for the Modern Data Stack.

Our industry has moved toward tools like dbt and Terraform because we value artifacts over scripts. We declare our state, version control it, and deploy it via CI/CD pipelines.

If we follow the paper’s implication of using imperative ALTER TABLE statements or ad-hoc scripts, we regress into Snippet Engineering. We cannot effectively version control 500 imperative ALTER statements scattered across time, and having a dependency chain. It breaks our CI/CD patterns and destroys our ability to audit the evolution of the truth.

The Monolith of Compromise

The In-Place approach (using ALTER TABLE to add semantics) assumes a unanimous business reality that rarely exists. In practice, Marketing, Finance, and Product often have conflicting definitions of the same entity.

Marketing defines an Active Customer as someone who visited the site in 30 days.

Finance defines an Active Customer as someone who paid an invoice in 30 days.

If we force both definitions into the physical customers table using the paper's proposed method, we create a Monolith of Compromise. The physical table becomes enlarged with conflicting logic (active_user_mktg, active_user_fin). The schema can become unreadable and unmanageable.

Every time a department wants to iterate on a metric, they must trigger a DDL change on a core production table. This tightly coupled architecture prevents localized experimentation and leads to a governance void. The ownership is unclear.

We need a higher-level abstraction. We must advocate for a new architectural entity that solves these ambiguities: the standalone Semantic Graph object.

The Architectural Solution: The Standalone Semantic Graph Object

To avoid the In-Place trap and the inevitable monolith of compromise, we must stop treating business logic as a mere attribute of physical storage.

Physical schemas are for storage; Semantic Graphs are for humans and Agents.

In the industry, we talk endlessly about “Data Products,” yet we struggle with the delivery mechanism. Is a Data Product a table? A dbt model? A Looker view? We lack a unified artifact that encapsulates the Data (Storage), the Topology (Relationships), and the Context (Governance).

I am advocating for a new architectural entity: the standalone Semantic Graph object. This could become the true Data Product, a native object in the database that separates the Producer (who manages raw data and partitioning) from the Consumer (who needs a governed, traversable business world).

Object Definition: Artifact over Patching

In a modern Data Team, tables are implementation details. They could never be exposed to the end user. The Semantic Graph could be the only exposed interface, in a way, the API of your data platform.

So, instead of patching tables with a laundry list of imperative ALTER commands, we define the Graph as a cohesive, version-controlled object. This object acts as a semantic projection over the storage.

Note: The previous pseudo-code illustrates the concept, I have used DDL syntax to align with the logic presented in the Google research paper.

However, this raises a valid question: Is DDL truly the right interface for defining business semantics? A Semantic Layer exists in the minds of business users, and forcing it into strict SQL syntax might be a barrier to adoption in itself.

Solving the Architectural Traps

By shifting from ALTER TABLE to CREATE SEMANTIC GRAPH, we resolve the major bottlenecks of the In-Place approach.

Encapsulating complexity

The consumer simply traverses orders.products.name the via the graph object same way the paper is proposing from tables. But here we does not care about from which table it comes from as we have a single graph entry-point pointing to different underlying tables. In addition, the complexity is contained within the object, not the table.

Decoupling truths (the anti-monolith)

We can finally acknowledge that different teams need different truths. Marketing can have a Marketing Graph, and Finance can have a Finance Graph. Both point to the same physical storage, but they project different semantic realities, avoiding the monolith of compromise.

Artifact engineering

We move from Snippet Engineering to versioned artifacts. We can deploy revenue_product_v2 alongside v1 without touching tables. This allows for safer, and faster experimentation without breaking production pipelines.

Native Governance

It includes the human metadata, owners, descriptions, and Golden Query patterns. As the object carries the metadata, we can easily see it tightly coupled with Dataplex, where lineage or data quality rules are attached to the graph, not just the raw tables.

Self-Service UI

This enables a “Graph View” over the data product directly into the GCP Console. Consumers can explore the domain visually in a single place rather than digging through raw SQL schemas and a full set of tables.

The Interface for the Agentic Ecosystem

Why does this architectural debate matter so much right now? Because while humans are the primary consumers of data today, AI Agents are the primary consumers of tomorrow.

The research paper pleasantly notes that “NL2SQL is focused on the wrong problem.” Generic LLMs fail at SQL not because they lack technical skill, they write syntactically perfect code, but because they lack context. For instance they often hallucinate relationships that does not exist or logic that defies business rules.

So when we connect the new BigQuery Data Agents directly to raw tables, we expose them to these contextual gaps immediately.

In contrast, a Semantic Graph Object acts as the instruction manual for the Agent. It provides a governed playground with strict guardrails and we have the graph as a system prompt.

Boundaries: The Agent cannot invent a join path. It can only traverse relationships explicitly defined as an

EDGEin the specialized graph, eliminating topological hallucinations.Instructions: The

ANNOTATIONmetadata (Synonyms, Metadata, Golden Query patterns, …) provide the necessary semantic definitions, telling the agent exactly what the data means before it ever attempts to write a query.

Conclusion

The Google research paper offers us an awesome future: a database engine that finally understands business topology. Features like Virtual Columns and Join Columns are the missing link that will allow us to retire unstable and verbose SQL scripts in favor of a robust, engine-native logic.

This research proves the engine is capable. I don’t think the industry is.

While these primitives are excellent building blocks, pushing business logic directly into raw tables is a governance nightmare waiting to happen.

To make this vision work at scale, we need a standalone Semantic Graph Object. We need a way to encapsulate these new features into governed, versioned interfaces that separate the producer’s implementation from the consumer’s experience.

This is the evolution of the Data Product.