Introduction

In data engineering and analytics, documentation and user interfaces need to be both easy to access and secure. As data workflows become more complex, data teams face the dual challenge of providing that access while protecting their data infrastructure.

This article is a guide for data engineers and analytics professionals. We explore a strategy for securing your dbt (data build tool) documentation and Elementary UI using Google Cloud products.

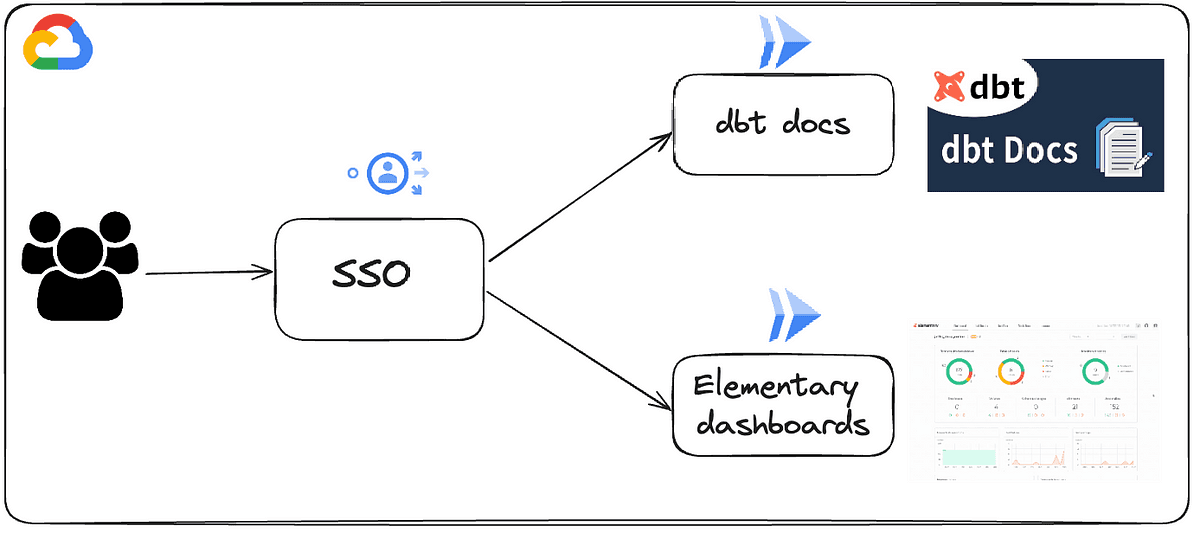

Focusing on the integration of Cloud Run and Identity-Aware Proxy (IAP), we aim to create a secure, scalable, and intuitive environment for hosting dbt docs and Elementary dashboards. Expect to learn how to enhance security without compromising on user experience, ensuring that your data infrastructure is both robust and user-friendly.

We will start this article by introducing some concepts regarding dbt docs and Elementary and then jump into technical implementations on how to host those artifacts in a secured, yet accessible manner.

Understanding dbt docs and their Importance

dbt (data build tool) plays a pivotal role in contemporary data engineering, serving as a bridge that transforms raw data into actionable insights. A standout feature of dbt is its documentation capabilities. dbt docs generates a website that documents your project, including model code, a Directed Acyclic Graph (DAG) illustrating your project’s workflow, column tests, and more. This documentation goes beyond the project files: by querying the information schema of your data warehouse, it also reports column data types, table sizes, and more.

By leveraging dbt docs, teams can benefit from an out-of-the-box data catalog that will improve collaboration between technical teams and business stakeholders.

Elementary Dashboard: A Glimpse into Data Health

Elementary is a reference package for the observability of your dbt workloads. The Elementary dashboard gives a visual representation of your data’s health and status. It is more than a monitoring tool: it is a user-centric interface for navigating and interpreting data health indicators. Users can view test results, assess the health of tables, and keep an eye on monitoring status.

What sets the Elementary dashboard apart is its ability to provide a high-level overview while also allowing for in-depth analysis. It provides a clear and intuitive understanding of model performance, data lineage, and overall data quality, making it an indispensable tool for maintaining the integrity and reliability of data projects.

Deploying with Cloud Run and Load Balancer

Both dbt docs and Elementary dashboards produce HTML artifacts that can be served locally, but this is not sustainable for production deployments. One solution is to store those artifacts in a GCS bucket and serve them through a compute resource such as Cloud Run. To secure access to Cloud Run, we then leverage IAP to ensure that only authorized users can reach the services. The following architecture depicts this solution:

Google Cloud Run stands out for its ability to run stateless containers, which is perfect for deploying web applications like dbt docs and Elementary UI. It provides the flexibility and ease of serverless architecture, allowing for automatic scaling and management based on traffic demands. Integrating Cloud Run with Google Cloud Load Balancer enhances this setup by distributing incoming traffic across multiple instances, ensuring high availability and consistent performance even during peak loads.
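As an illustration of the Cloud Run part of this setup, here is a minimal Terraform sketch and not the authors’ actual code. The service name, region, and container image are hypothetical placeholders. Restricting ingress to the load balancer is also an assumption on our side: it keeps requests from bypassing the Load Balancer (and therefore IAP) through the default Cloud Run URL.

```hcl
# Hypothetical inputs: none of these values come from the article.
variable "region" {
  type    = string
  default = "europe-west1"
}

variable "dbt_docs_image" {
  description = "Container image that serves the dbt docs HTML artifacts (placeholder)."
  type        = string
}

# Cloud Run service that serves the dbt docs.
resource "google_cloud_run_v2_service" "dbt_docs" {
  name     = "dbt-docs"
  location = var.region

  # Assumption: only accept traffic that arrives through the load balancer.
  ingress = "INGRESS_TRAFFIC_INTERNAL_LOAD_BALANCER"

  template {
    containers {
      image = var.dbt_docs_image
    }
  }
}
```

A second, near-identical resource would cover the Elementary UI.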

All resources in the architecture above are deployed via Terraform (feel free to get in touch for the code). This approach makes the infrastructure easy to replicate, version, and maintain, and keeps the deployment process efficient and less error-prone.

In the following sections, we will dive deeper into the networking and security components of this architecture and outline the step-by-step process of setting it up.

Serverless Network Endpoint Groups (NEGs)

Our deployment begins with the creation of Serverless Network Endpoint Groups (NEGs). In a nutshell, NEGs act as a bridge between Load Balancers and serverless services like Cloud Run: they let a Load Balancer use a Cloud Run service as a backend.

When we define a NEG for each Cloud Run service, we essentially map out a network route. This route directs requests from the Load Balancer to the appropriate Cloud Run service. The NEG ensures that incoming requests reach the right service, while Cloud Run itself distributes them across its instances.
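A minimal sketch of one serverless NEG per Cloud Run service could look like the following. The region and service names are hypothetical placeholders; the NEG has to be created in the same region as the Cloud Run service it points to.

```hcl
# Hypothetical inputs: names and region are placeholders, not from the article.
variable "region" {
  type    = string
  default = "europe-west1"
}

variable "cloud_run_services" {
  description = "Logical name => name of an existing Cloud Run service."
  type        = map(string)
  default = {
    "dbt-docs"   = "dbt-docs"
    "elementary" = "elementary-ui"
  }
}

# One serverless NEG per Cloud Run service.
resource "google_compute_region_network_endpoint_group" "serverless" {
  for_each = var.cloud_run_services

  name                  = "${each.key}-neg"
  region                = var.region
  network_endpoint_type = "SERVERLESS"

  cloud_run {
    service = each.value
  }
}
```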

Efficient Traffic Management with URL Maps and Backend Services

For easier access, you will likely want to host dbt docs and the Elementary dashboard on your own domains. Depending on which URL a user requests, the request must be sent to the matching Cloud Run service. We leverage URL Maps and Backend Services to dispatch traffic from each URL to the proper backend. URL Maps in Google Cloud dictate how incoming requests are routed. They apply predefined rules that inspect the host header and URL path of each request and direct it to the appropriate backend service.

This mechanism is particularly crucial when managing traffic for multiple services. For instance, in our setup, URL Maps enable us to define specific paths for dbt docs and Elementary UI, so that requests are seamlessly and accurately routed to the corresponding Cloud Run service. This level of control is not only beneficial for directing traffic efficiently but also plays a key role in enhancing the user experience.

Backend Services complement this by acting as the workhorses that handle these requests. Once a URL Map routes a request to a specific Backend Service, this service then processes the request, utilizing the assigned Serverless NEGs to communicate with the respective Cloud Run instances.

Below is a snippet of Terraform code to achieve this.
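A minimal sketch of such a configuration, not necessarily the authors’ exact code, is shown here. It assumes host-based routing with two hypothetical hostnames (dbt-docs.example.com and elementary.example.com) and takes the serverless NEG IDs as input variables; all resource names are placeholders.

```hcl
# Hypothetical inputs: IDs of the serverless NEGs created for each service.
variable "dbt_docs_neg_id" {
  type = string
}

variable "elementary_neg_id" {
  type = string
}

# One backend service per UI, each backed by its serverless NEG.
resource "google_compute_backend_service" "dbt_docs" {
  name                  = "dbt-docs-backend"
  load_balancing_scheme = "EXTERNAL_MANAGED" # assumption: global external Application Load Balancer

  backend {
    group = var.dbt_docs_neg_id
  }
}

resource "google_compute_backend_service" "elementary" {
  name                  = "elementary-backend"
  load_balancing_scheme = "EXTERNAL_MANAGED"

  backend {
    group = var.elementary_neg_id
  }
}

# The URL map picks a backend service based on the requested host.
resource "google_compute_url_map" "data_ui" {
  name            = "data-ui-url-map"
  default_service = google_compute_backend_service.dbt_docs.id

  host_rule {
    hosts        = ["dbt-docs.example.com"] # placeholder hostname
    path_matcher = "dbt-docs"
  }

  host_rule {
    hosts        = ["elementary.example.com"] # placeholder hostname
    path_matcher = "elementary"
  }

  path_matcher {
    name            = "dbt-docs"
    default_service = google_compute_backend_service.dbt_docs.id
  }

  path_matcher {
    name            = "elementary"
    default_service = google_compute_backend_service.elementary.id
  }
}
```

Host-based rules are used in this sketch because each UI then keeps serving from its root path; path-based rules under a single host are possible as well, provided each application can be served under a path prefix.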

Integration of Identity-Aware Proxy (IAP) and IAM Policies

An important component of our deployment strategy is the integration of Identity-Aware Proxy (IAP), which serves as a guardian of security in this architecture. On the user side, this appears as a simple SSO login page.

The power of IAP lies in its ability to enforce secure access without the need for traditional VPNs or public-facing endpoints. It acts as a control plane, interposed between users and the Cloud Run services. When a user attempts to access the dbt documentation or Elementary UI, IAP steps in to verify their identity using Google’s robust authentication mechanisms. It doesn’t stop there; IAP also evaluates the user’s permissions based on predefined access policies, ensuring that only those with the right level of authorization can gain entry.

To be authorized to access the different Cloud Run backend services, users must be granted the role “roles/iap.httpsResourceAccessor” (IAP-secured Web App User) on those backend services. We recommend granting it to groups rather than individual users for easier management.

Terraform can be used to grant users and groups access to the different backend services.
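A minimal sketch of such a grant, assuming IAP is already enabled on the backend services, could look like this. The project ID, backend service names, and group address are hypothetical placeholders.

```hcl
# Hypothetical inputs: none of these values come from the article.
variable "project_id" {
  type = string
}

variable "backend_service_names" {
  description = "Names of the IAP-protected backend services."
  type        = set(string)
  default     = ["dbt-docs-backend", "elementary-backend"]
}

variable "allowed_group" {
  description = "Google group whose members may access the UIs (placeholder)."
  type        = string
  default     = "data-team@example.com"
}

# Grant the group access through IAP on each backend service.
resource "google_iap_web_backend_service_iam_member" "ui_access" {
  for_each = var.backend_service_names

  project             = var.project_id
  web_backend_service = each.value
  role                = "roles/iap.httpsResourceAccessor"
  member              = "group:${var.allowed_group}"
}
```

Using the non-authoritative `_iam_member` resource adds this one binding without overwriting other members that already hold the role.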

Conclusion

It’s one thing to host services locally; it’s a totally different thing to host them in a production-grade manner with proper networking and security. In this article, we took a deep dive into a full implementation that leverages Google Cloud products to host the artifacts produced by dbt and the Elementary package.

If you are using dbt Core and the Elementary package, it is important that your team members (technical and business) can access these UIs, with security and user convenience as core pillars. Tools that are both safe and easy to access will increase trust in your data ecosystem and put your company in a position to be more data-driven.

— — — — — — — — — — — — — — — —

If you enjoyed reading this article, stay tuned as we regularly publish technical articles on dbt, Google Cloud, and how best to secure these tools. Follow Astrafy on LinkedIn to be notified of the next article.

If you are looking for support on Data Stack or Google Cloud solutions, feel free to reach out to us at sales@astrafy.io.